Experiments suggest AI implementation without AI education ‘leads to increasing human stupidity’

Asked to identify which of six fictional persons is most likely to be a terrorist, 85% of 1,500 participants in a psychology experiment selected one of the least likely suspects.

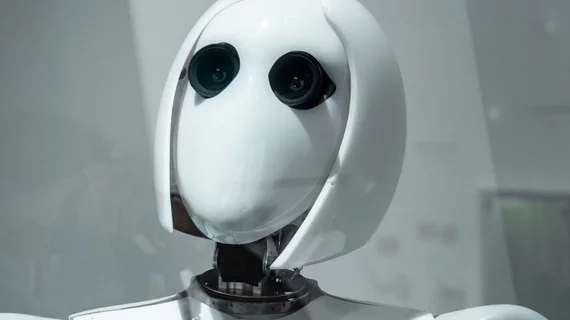

And they did so specifically because they’d seen an AI robot make the ridiculous choice first.

Those who did not first observe the AI in action made more reasonable selections.

Based on the results of this experiment and a second one, lead researcher Michal Klichowski, PhD, of Adam Mickiewicz University in Poland, arrived at two conclusions.

First, people trust AI. “Its choice can make absolutely no sense, and yet people assume that it is wiser than they are (as a certain form of collective intelligence),” Klichowski comments. Most people seem susceptible to this effect, he adds, “and in the future it will have even greater impact because the programmed components of intelligent machine operation have started to be expressly designed to calibrate user trust in AI.”

Second, developing AI without educating people about its limitations as well as its potential “leads to increasing human stupidity,” Klichowski notes, citing prior research.

And this troubling phenomenon, he warns, could be “driving us toward a dystopian future of society characterized by widespread obedience to machines.”

Klichowski’s in-depth description of this work appears in Frontiers in Psychology.

“[I]f we truly want to improve our society through AI so that AI can enhance human decision making, human judgment and human action, it is important to develop not only AI but also standards on how to use AI to make critical decisions, e.g., related to medical diagnosis, and, above all, programs that will educate the society about AI and increase social awareness on how AI works, what its capabilities are and when its opinions may be useful,” he concludes.

“Otherwise, as our results show, many people, often in very critical situations, will copy the decisions or opinions of AI, even those that are unambiguously wrong or false … and implement them.”

The journal has posted the paper in full for free.